AI Literacy Quick Start

Start today, form the habit

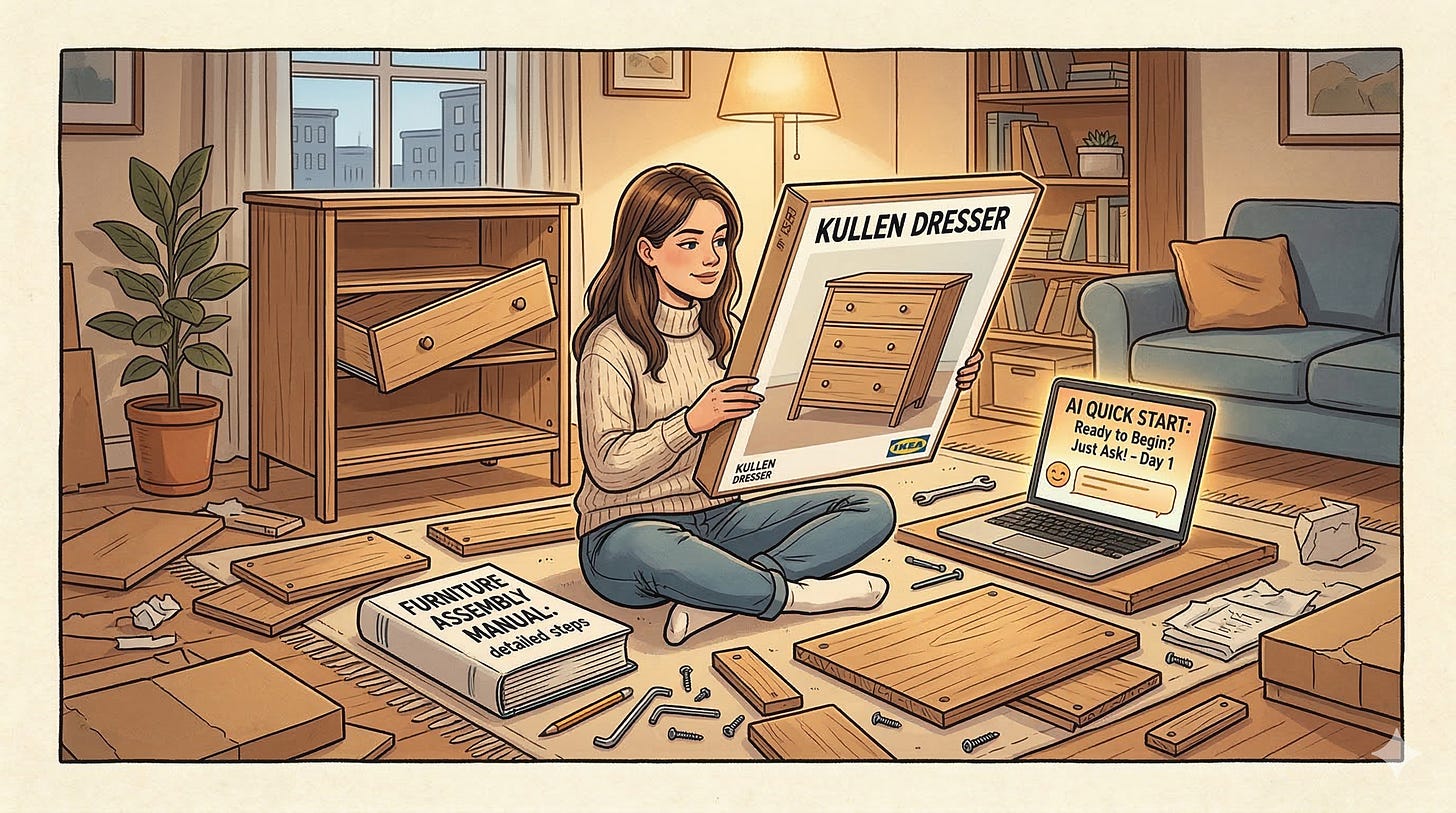

Think of this document as the picture on the box of the IKEA furniture you just bought, because nobody’s got time to read the full instruction manual (until the damn drawers don’t close).

The furniture will look like furniture, but the drawers may not close correctly.

Once you get tired of that soft-close drawer that still won’t close after three frustrating attempts at assembling it without the step-by-step instructions, the 30-day plan is waiting for you.

Today: Three Things, 45 Minutes

This guide reflects the AI landscape as of 2026. The principles hold over time; the tools change. When you read this, verify that the specific tools mentioned are still the best options available.

1. Understand one idea (10 minutes)

AI does not look up answers. It predicts the most likely response based on patterns in its training data. That means it can be completely wrong while sounding completely certain. Keep that in mind for everything that follows.

Here is the simplest way to see the difference:

Spreadsheet: Formula → Calculation → Fact.
AI: Prompt → Pattern Match → Prediction.

One is a calculator. The other is an educated guess. A very fast, very confident, sometimes very wrong educated guess.

The Street Rule: If you wouldn’t bet $20 on the AI’s answer being right, don’t hit send until you’ve checked a credible source.

2. Talk to it (20 minutes)

Go to claude.ai, gemini.google.com, copilot.microsoft.com, or chat.openai.com and create a free account. Crucially, use only public or fictional information today. Never paste confidential work data, proprietary information, or personal secrets into these free tools. Ask it to explain your job to a 10-year-old, or ask it something completely absurd. The goal is to see how it handles a conversation and to break the magic-box myth.

3. Find one hallucination (15 minutes)

This exercise only works if you pick the right kind of question. Do not ask who won a major sporting event or what the capital of a country is. Those are too easy, and the AI will likely get them right, which teaches you nothing.

Instead, force it to guess. Ask it to summarize a niche book you love in detail. Ask it to list three legal precedents for a very specific and obscure scenario. Ask it about a local regulation or a specialist in your field. These are the cracks where hallucinations live.

Ask the same question three times, each with slight adjustments to the wording. Notice how the responses differ. Then verify at least one specific claim against a real source. At least one detail will likely be wrong. Write it down. This teaches two things at once: AI makes errors, and its answers are not fixed facts. They are predictions.

AI output is a starting point, not a final answer. Verify before you act.

The One Rule That Covers Everything

If you forget everything else, remember this: your spreadsheet gives you the same answer every time. AI gives you a prediction. Those are not the same thing. The gap between them is where your judgment lives.

Still Got a Drawer That Won’t Close?

Is your Swedish furniture fully assembled, and do the drawers soft-close as advertised? If not, the 30-day plan is waiting for you. It covers hallucination detection, prompting techniques, workflow integration, validation frameworks, agent awareness, and how to audit AI output before it causes problems.

The Lowe Down

Action over Procrastination: Do something today, not someday. Forty-five minutes. Three things. That is progress toward closing the new literacy gap.

Judgment over Automation: AI is a powerful co-pilot, but you are still the captain. If you outsource your critical thinking to a prediction engine, you are not saving time; you are compounding risk.

It’s a no brainer.

If you found value in this guide, share it with your colleagues, your HR department, or your IT team. Help the next generation develop a new literacy. The Convergence Divide closes one person at a time.

Developed by Kris Lowe, author of Lowe Intelligence: It’s a no brainer. Kris holds a Master of Arts in Education & Instructional Technology from Virginia Tech, AI certifications from Google and Microsoft, CompTIA Security+, and the Prosci Certified Change Practitioner credential, and brings over 30 years of experience in enterprise technology and digital transformation.